
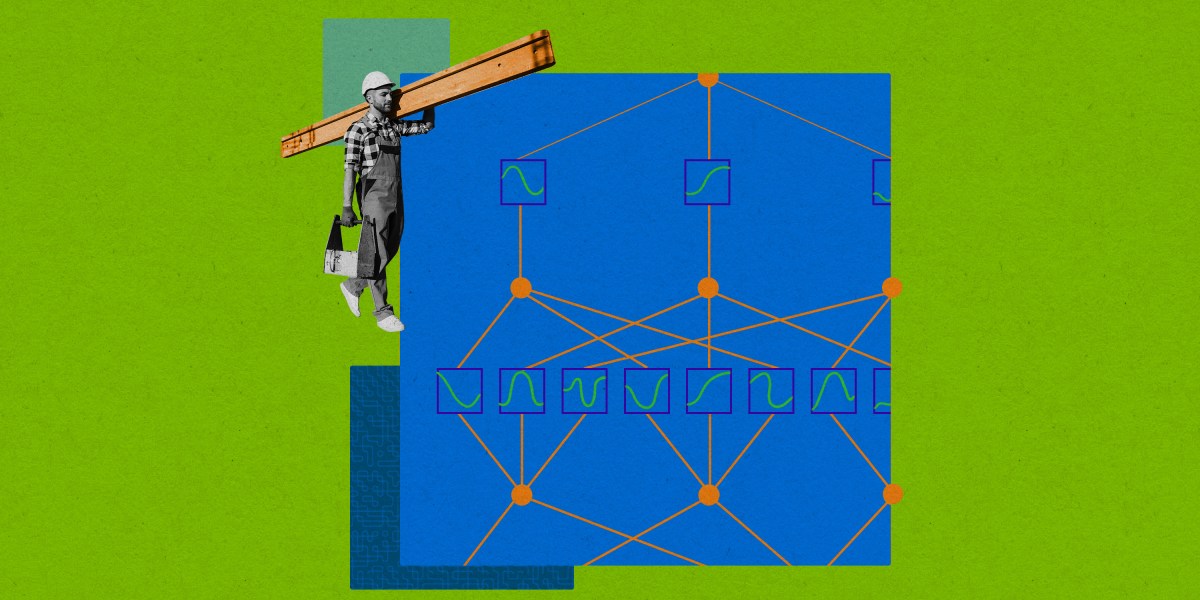

The simplification, studied in detail by a group led by researchers at MIT, could make it easier to understand why neural networks produce certain outputs, help verify their decisions, and even probe for bias. Preliminary evidence also suggests that as KANs are made bigger, their accuracy increases faster than that of networks built from traditional neurons.

“It's interesting work,” says Andrew Wilson, who studies the foundations of machine learning at New York University. “It's nice that people are trying to fundamentally rethink the design of these [networks].”

The basic elements of KANs were actually proposed in the 1990s, and researchers kept building simple versions of such networks. But the MIT-led team has taken the idea further, showing how to build and train bigger KANs, performing empirical tests on them, and analyzing some KANs to demonstrate how their problem-solving ability could be interpreted by humans. “We revitalized this idea,” said team member Ziming Liu, a PhD student in Max Tegmark's lab at MIT. “And, hopefully, with the interpretability… we [may] not [have to] assume neural networks are black boxes.”

While it's still early days, the team's work on KANs is attracting attention. GitHub pages have sprung up that show how to use KANs for myriad applications, such as image recognition and solving fluid dynamics problems.

Finding the formula

The current advance came when Liu and colleagues at MIT, Caltech, and other institutes were trying to understand the inner workings of standard artificial neural networks.

Today, almost all types of AI, including those used to build large language models and image recognition systems, include sub-networks known as multilayer perceptrons (MLPs). In an MLP, artificial neurons are arranged in dense, interconnected “layers.” Each neuron has within it something called an “activation function”: a mathematical operation that takes in a bunch of inputs and transforms them in some pre-specified manner into an output.
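To make the MLP description concrete, here is a minimal plain-Python sketch of a tiny two-layer perceptron. It assumes the common setup in which each neuron forms a weighted sum of its inputs plus a bias and then passes that sum through a fixed activation function. ReLU is used here as one illustrative choice. The function names (`relu`, `neuron`, `dense_layer`, `mlp`) and the weights in `EXAMPLE_LAYERS` are hypothetical, picked by hand for illustration rather than learned from data.

```python
from typing import Callable, List, Sequence, Tuple

Activation = Callable[[float], float]
# One layer = (one weight row per neuron, one bias per neuron, activation).
Layer = Tuple[List[List[float]], List[float], Activation]


def relu(x: float) -> float:
    """A fixed, pre-specified activation: negative sums become 0."""
    return max(0.0, x)


def identity(x: float) -> float:
    """Pass-through activation, often used on an MLP's output layer."""
    return x


def neuron(inputs: Sequence[float], weights: Sequence[float],
           bias: float, activation: Activation) -> float:
    # Combine all inputs with the neuron's weights, then apply the fixed activation.
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(total)


def dense_layer(inputs: Sequence[float], layer: Layer) -> List[float]:
    # "Dense": every neuron in the layer receives every input.
    weight_rows, biases, activation = layer
    return [neuron(inputs, row, b, activation) for row, b in zip(weight_rows, biases)]


def mlp(inputs: Sequence[float], layers: Sequence[Layer]) -> List[float]:
    # Feed the outputs of each layer into the next, in order.
    x = list(inputs)
    for layer in layers:
        x = dense_layer(x, layer)
    return x


# Hypothetical hand-picked weights: 2 inputs -> 2 hidden ReLU neurons -> 1 output.
EXAMPLE_LAYERS: List[Layer] = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0], relu),
    ([[1.0, 2.0]], [0.0], identity),
]

if __name__ == "__main__":
    print(mlp([2.0, 1.0], EXAMPLE_LAYERS))  # → [4.0]
```

In this sketch, training would adjust only the numbers in the weight rows and biases. The activation functions themselves stay fixed, which is the "pre-specified" transformation the paragraph above describes.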
