
Diffusion models have recently emerged as the de facto standard for generating complex, high-dimensional outputs. You may know them for their ability to produce stunning AI art and hyper-realistic synthetic images, but they have also found success in other applications such as drug design and continuous control. The key idea behind diffusion models is to iteratively transform random noise into a sample, such as an image or protein structure. This is typically motivated as a maximum likelihood estimation problem, where the model is trained to generate samples that match the training data as closely as possible.

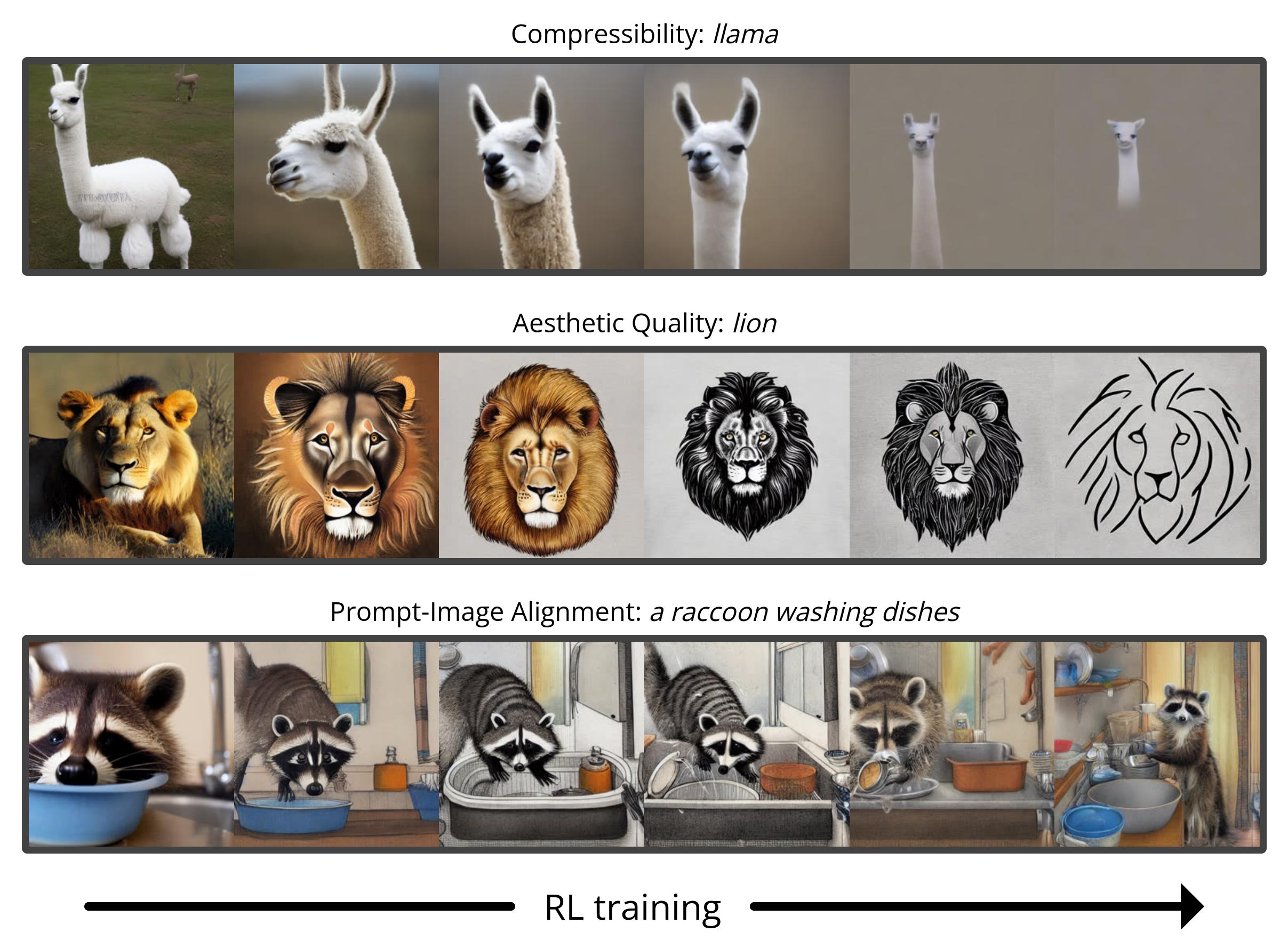

However, most use cases of diffusion models are not directly concerned with matching the training data, but instead with a downstream objective. We don’t just want an image that looks like existing images, but one that has a specific type of appearance; we don’t just want a drug molecule that is physically plausible, but one that is as effective as possible. In this post, we show how diffusion models can be trained on these downstream objectives directly using reinforcement learning (RL). To do this, we finetune Stable Diffusion on a variety of objectives, including image compressibility, human-perceived aesthetic quality, and prompt-image alignment. The last of these objectives uses feedback from a large vision-language model to improve the model’s performance on unusual prompts, demonstrating how powerful AI models can be used to improve each other without any humans in the loop.

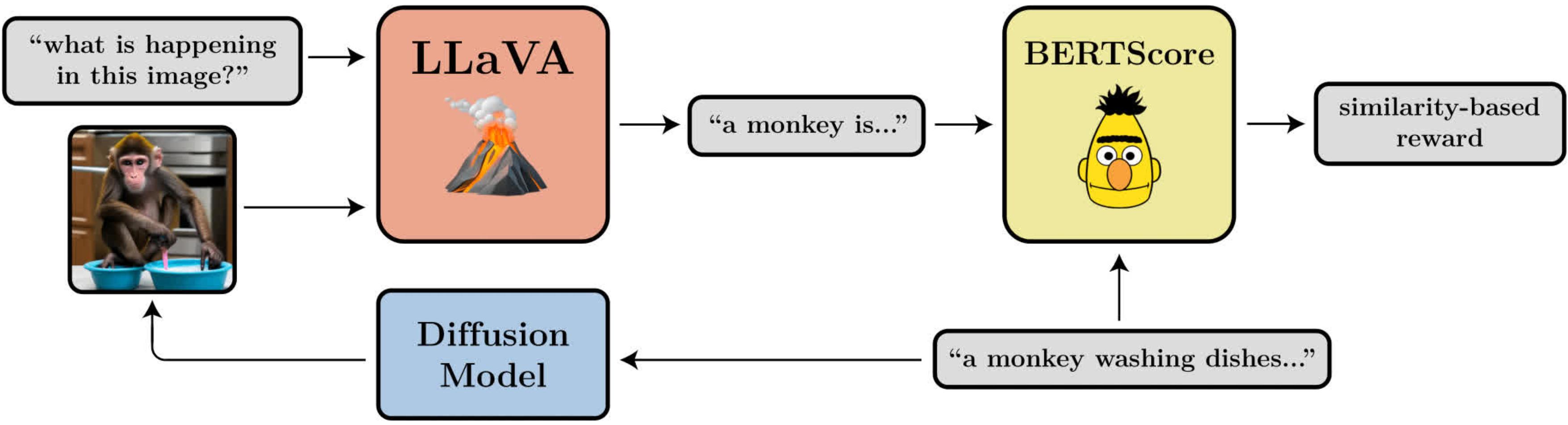

A diagram illustrating the prompt-image alignment objective. It uses LLaVA, a large vision-language model, to evaluate generated images.

Denoising Diffusion Policy Optimization

When turning diffusion into an RL problem, we make only the most basic assumption: given a sample (e.g. an image), we have access to a reward function that we can evaluate to tell us how “good” that sample is. Our goal is for the diffusion model to generate samples that maximize this reward function.

Diffusion models are typically trained using a loss function derived from maximum likelihood estimation (MLE), meaning they are encouraged to generate samples that make the training data look more likely. In the RL setting, we no longer have training data, only samples from the diffusion model and their associated rewards. One way we can still use the same MLE-motivated loss function is by treating the samples as training data and incorporating the rewards by weighting the loss for each sample by its reward. This gives us an algorithm that we call reward-weighted regression (RWR), after existing algorithms from RL literature.
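The reward-weighting idea can be sketched in a few lines. This is only an illustration under stated assumptions: the per-sample denoising losses are taken as already computed, and the function name, the softmax weighting, and the temperature `beta` are hypothetical choices rather than the exact scheme used in this work.

```python
import math


def reward_weighted_loss(losses, rewards, beta=1.0):
    """Combine per-sample losses, weighting each one by its reward.

    The softmax weighting with temperature `beta` is an illustrative
    assumption, not necessarily the weighting used in the original work.
    """
    if len(losses) != len(rewards) or not losses:
        raise ValueError("losses and rewards must be non-empty and equal length")

    # Subtract the max reward before exponentiating for numerical stability.
    max_reward = max(rewards)
    exps = [math.exp(beta * (r - max_reward)) for r in rewards]
    total = sum(exps)
    weights = [e / total for e in exps]

    # High-reward samples contribute more to the training signal.
    return sum(w * loss for w, loss in zip(weights, losses))
```

With equal rewards this reduces to the plain average loss; as one sample's reward grows, the combined loss is dominated by that sample.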

However, there are a few problems with this approach. One is that RWR is not a particularly exact algorithm: it maximizes the reward only approximately (see Nair et al., Appendix A). The MLE-inspired loss for diffusion is also not exact and is instead derived using a variational bound on the true likelihood of each sample. This means that RWR maximizes the reward through two levels of approximation, which we find significantly hurts its performance.

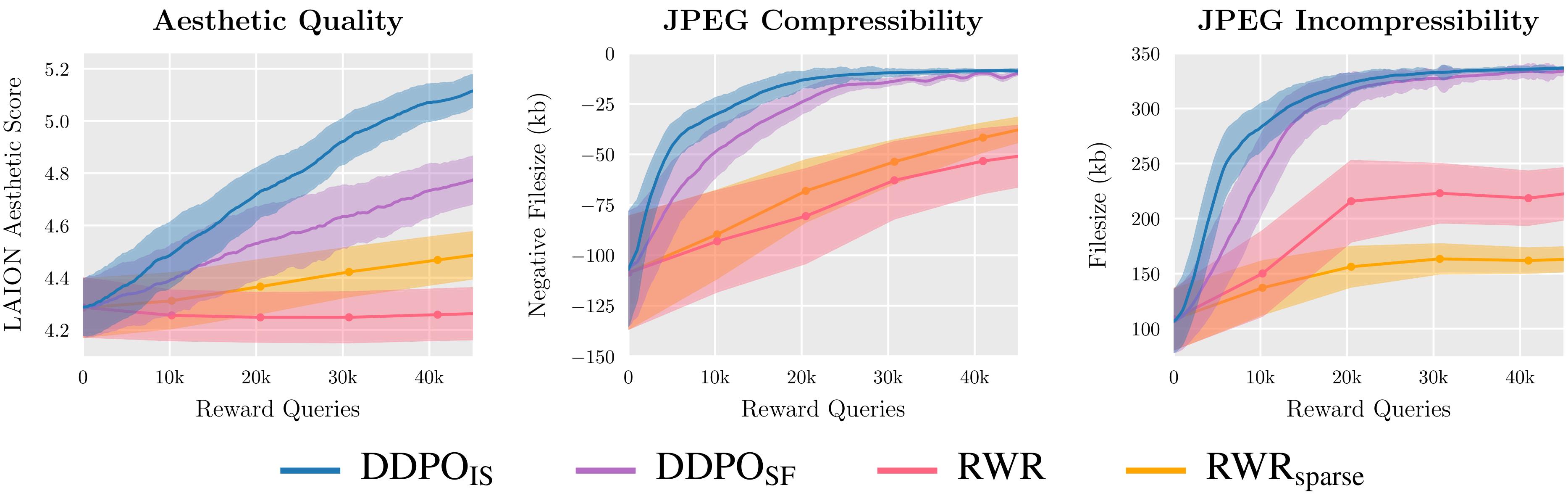

We evaluate two variants of DDPO and two variants of RWR on three reward functions and find that DDPO consistently achieves the best performance.

The key insight of our algorithm, which we call denoising diffusion policy optimization (DDPO), is that we can better maximize the reward of the final sample if we pay attention to the entire sequence of denoising steps that got us there. To do this, we reframe the diffusion process as a multi-step Markov decision process (MDP). In MDP terminology: each denoising step is an action, and the agent only gets a reward on the final step of each denoising trajectory when the final sample is produced. This framework allows us to apply many powerful algorithms from RL literature that are designed specifically for multi-step MDPs. Instead of using the approximate likelihood of the final sample, these algorithms use the exact likelihood of each denoising step, which is extremely easy to compute.
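To see why the per-step likelihood is easy to compute, here is a minimal sketch. It assumes each denoising step samples from an isotropic Gaussian whose mean is predicted by the model and whose standard deviation is fixed by the sampler. The function names and the flat-list representation of a sample are hypothetical, chosen only for illustration.

```python
import math


def gaussian_step_log_prob(x_prev, mean, sigma):
    """Exact log-density of one denoising step under an isotropic Gaussian.

    x_prev: the sample produced by this step (flat list of floats)
    mean:   the model-predicted mean for this step (same length)
    sigma:  the sampler's standard deviation for this step (assumed fixed)
    """
    if len(x_prev) != len(mean):
        raise ValueError("x_prev and mean must have the same length")
    log_norm = math.log(sigma) + 0.5 * math.log(2.0 * math.pi)
    return sum(
        -0.5 * ((x - m) / sigma) ** 2 - log_norm
        for x, m in zip(x_prev, mean)
    )


def trajectory_log_prob(steps):
    """Sum the step log-densities over one denoising trajectory.

    steps: iterable of (x_prev, mean, sigma) tuples, one per denoising step.
    In the MDP view each tuple is one action; the reward arrives only after
    the last one.
    """
    return sum(gaussian_step_log_prob(x, m, s) for x, m, s in steps)
```

Each step's log-probability is a closed-form expression, so no variational bound is needed at the level of individual actions.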

We chose to apply policy gradient algorithms due to their ease of implementation and past success in language model finetuning. This led to two variants of DDPO: DDPOSF, which uses the simple score function (SF) estimator of the policy gradient, also known as REINFORCE; and DDPOIS, which uses a more powerful importance sampled (IS) estimator. DDPOIS is our best-performing algorithm and its implementation closely follows that of proximal policy optimization (PPO).
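The two estimators can be contrasted with a small sketch of their surrogate objectives. This is not the authors' implementation: it computes only scalar objective values from precomputed per-step log-probabilities and advantages, leaves differentiation to whatever autodiff framework is used in practice, and the function names and the default `clip_range` are hypothetical.

```python
import math


def score_function_surrogate(log_probs, advantages):
    """REINFORCE-style objective: mean of log-probability times advantage.

    Its gradient with respect to the policy parameters is the score
    function estimator of the policy gradient.
    """
    return sum(lp * a for lp, a in zip(log_probs, advantages)) / len(log_probs)


def clipped_is_surrogate(new_log_probs, old_log_probs, advantages, clip_range=0.2):
    """PPO-style clipped, importance-sampled objective.

    new_log_probs: log-probabilities under the current policy
    old_log_probs: log-probabilities under the policy that collected the data
    clip_range:    how far the ratio may move from 1 (hypothetical default)
    """
    total = 0.0
    for new, old, adv in zip(new_log_probs, old_log_probs, advantages):
        ratio = math.exp(new - old)  # importance weight
        clipped = min(max(ratio, 1.0 - clip_range), 1.0 + clip_range)
        # Take the more pessimistic of the clipped and unclipped terms.
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)
```

The importance weight lets the same batch of sampled trajectories be reused for several optimization steps, while clipping keeps the updated policy close to the one that generated the data.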

Finetuning Stable Diffusion Using DDPO

For our main results, we finetune Stable Diffusion v1-4 using DDPOIS. We have four tasks, each defined by a different reward function:

- Compressibility: How easy is the image to compress using the JPEG algorithm? The reward is the negative file size of the image (in kB) when saved as a JPEG.

- Incompressibility: How hard is the image to compress using the JPEG algorithm? The reward is the positive file size of the image (in kB) when saved as a JPEG.

- Aesthetic Quality: How aesthetically appealing is the image to the human eye? The reward is the output of the LAION aesthetic predictor, which is a neural network trained on human preferences.

- Prompt-Image Alignment: How well does the image represent what was asked for in the prompt? This one is a bit more complicated: we feed the image into LLaVA, ask it to describe the image, and then compute the similarity between that description and the original prompt using BERTScore.
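The two file-size rewards above are simple enough to sketch directly. The example below assumes the image has already been encoded to JPEG bytes by an image library of your choice; the encoding step, the function names, and the convention of 1 kB = 1000 bytes are assumptions made for illustration.

```python
def jpeg_size_kb(jpeg_bytes):
    """Size of an already-encoded JPEG in kB (assuming 1 kB = 1000 bytes)."""
    return len(jpeg_bytes) / 1000.0


def compressibility_reward(jpeg_bytes):
    """Smaller files score higher: the reward is the negative file size."""
    return -jpeg_size_kb(jpeg_bytes)


def incompressibility_reward(jpeg_bytes):
    """Larger files score higher: the reward is the positive file size."""
    return jpeg_size_kb(jpeg_bytes)
```

Because both rewards depend only on the encoded size, they need no learned model, which makes them convenient test cases for an RL finetuning setup.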

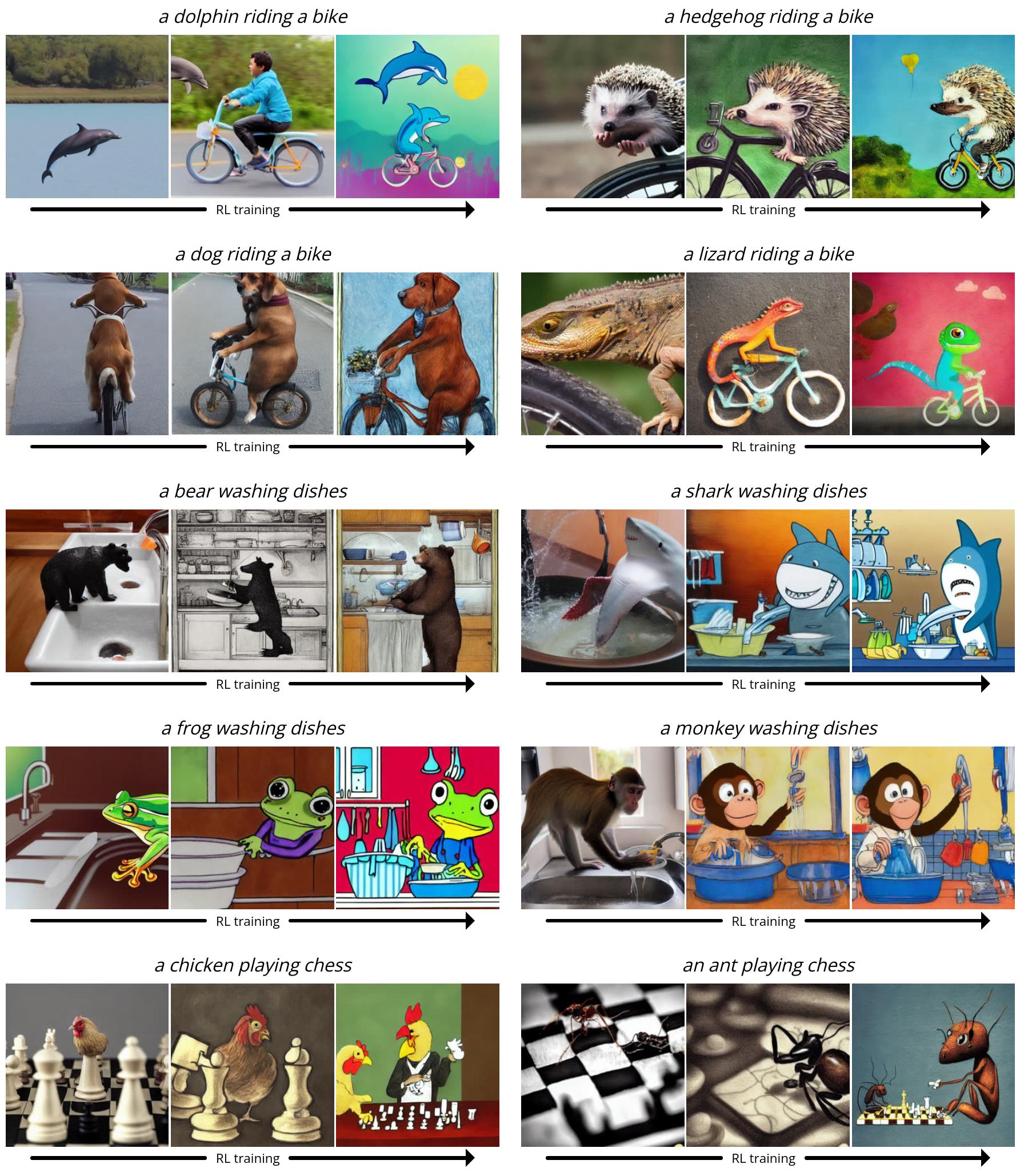

Since Stable Diffusion is a text-to-image model, we also need to pick a set of prompts to give it during finetuning. For the first three tasks, we use simple prompts of the form “a(n) [animal]”. For prompt-image alignment, we use prompts of the form “a(n) [animal] [activity]”, where the activities are “washing dishes”, “playing chess”, and “riding a bike”. We found that Stable Diffusion often struggled to produce images that matched the prompt for these unusual scenarios, leaving plenty of room for improvement with RL finetuning.
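The prompt templates can be sketched as follows. The helper names are hypothetical, and the vowel-based choice between “a” and “an” is a rough heuristic assumed for illustration. The three activities are the ones named above; the animal list used in training is not reproduced here.

```python
ACTIVITIES = ["washing dishes", "playing chess", "riding a bike"]


def with_article(noun):
    """Prefix a noun with "a" or "an" using a simple first-letter heuristic."""
    article = "an" if noun[0].lower() in "aeiou" else "a"
    return f"{article} {noun}"


def animal_prompt(animal):
    """Prompt of the form "a(n) [animal]", used for the first three tasks."""
    return with_article(animal)


def activity_prompt(animal, activity):
    """Prompt of the form "a(n) [animal] [activity]" for prompt-image alignment."""
    return f"{with_article(animal)} {activity}"
```

For example, `activity_prompt("cat", ACTIVITIES[1])` produces the prompt "a cat playing chess".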

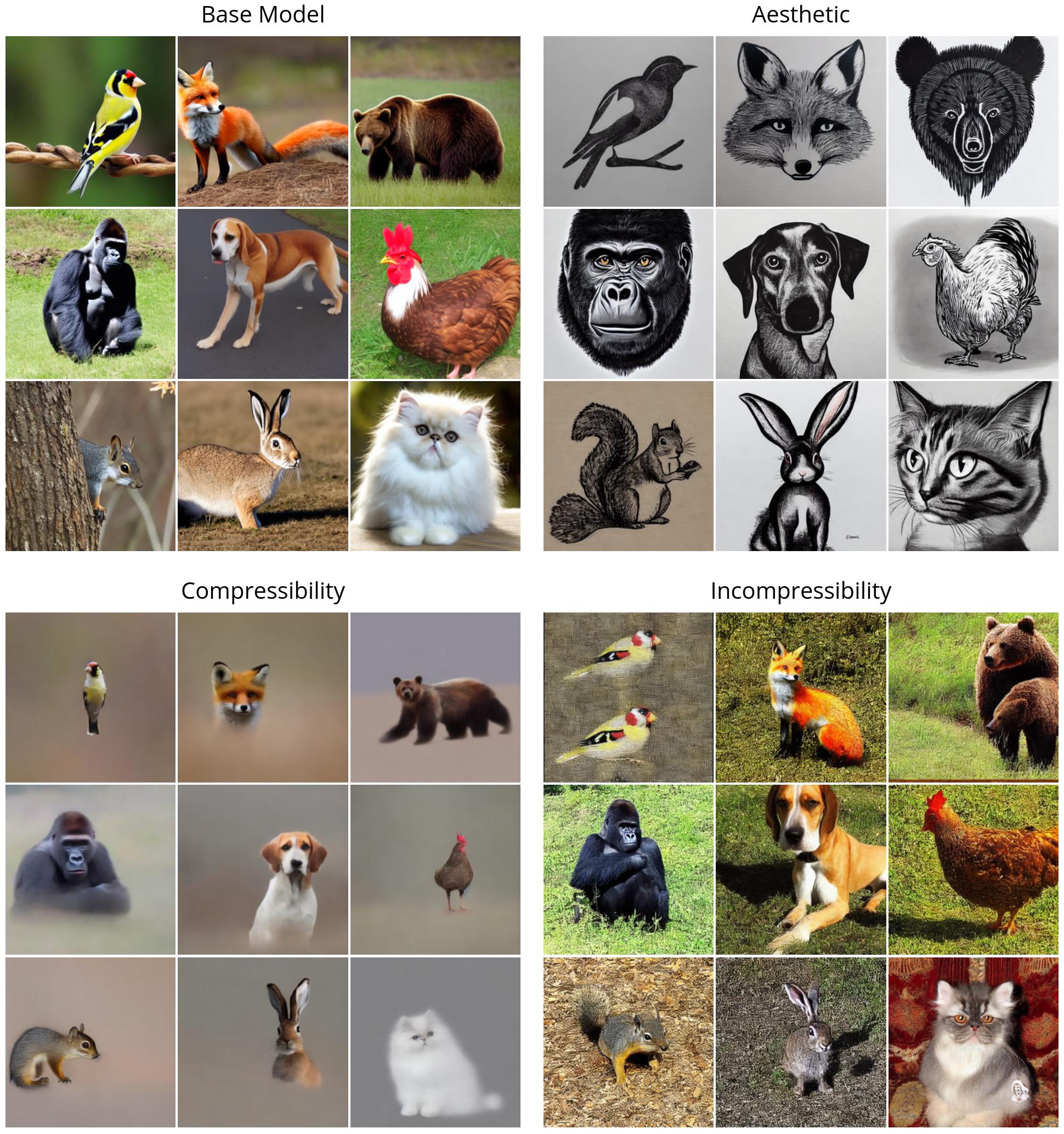

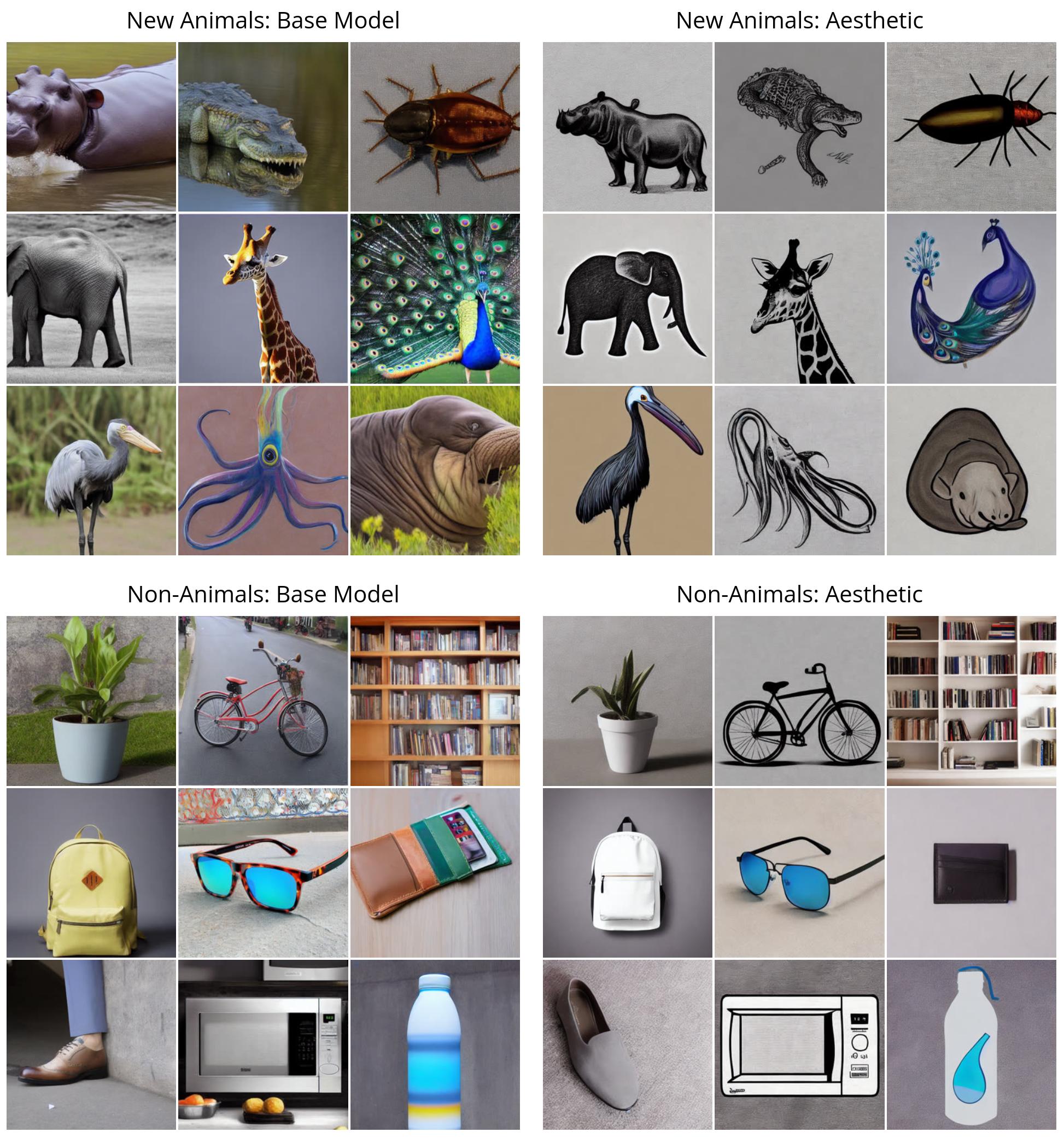

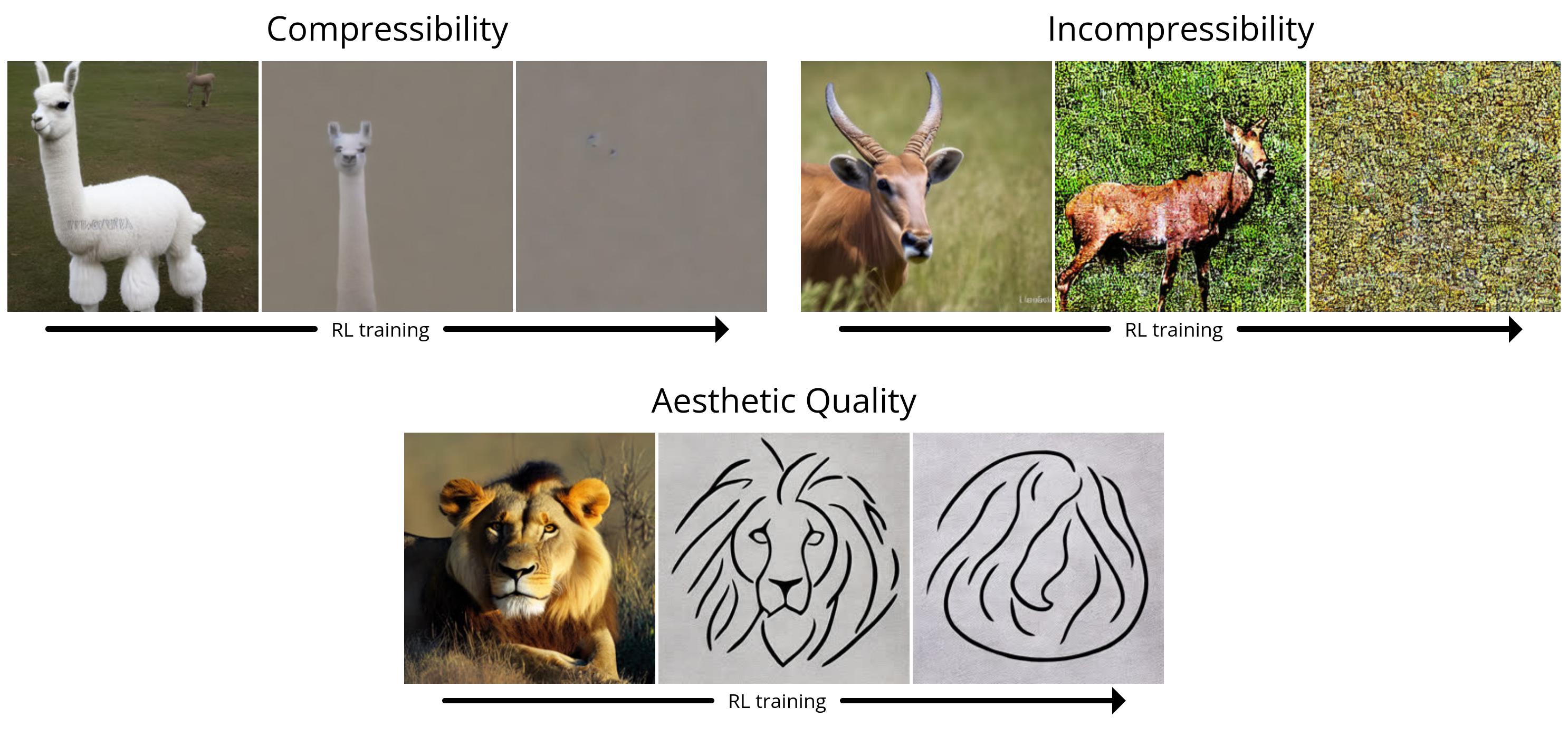

First, we illustrate the performance of DDPO on the simple rewards (compressibility, incompressibility, and aesthetic quality). All of the images are generated with the same random seed. In the top left quadrant, we illustrate what “vanilla” Stable Diffusion generates for nine different animals; all of the RL-finetuned models show a clear qualitative difference. Interestingly, the aesthetic quality model (top right) tends towards minimalist black-and-white line drawings, revealing the kinds of images that the LAION aesthetic predictor considers “more aesthetic”.

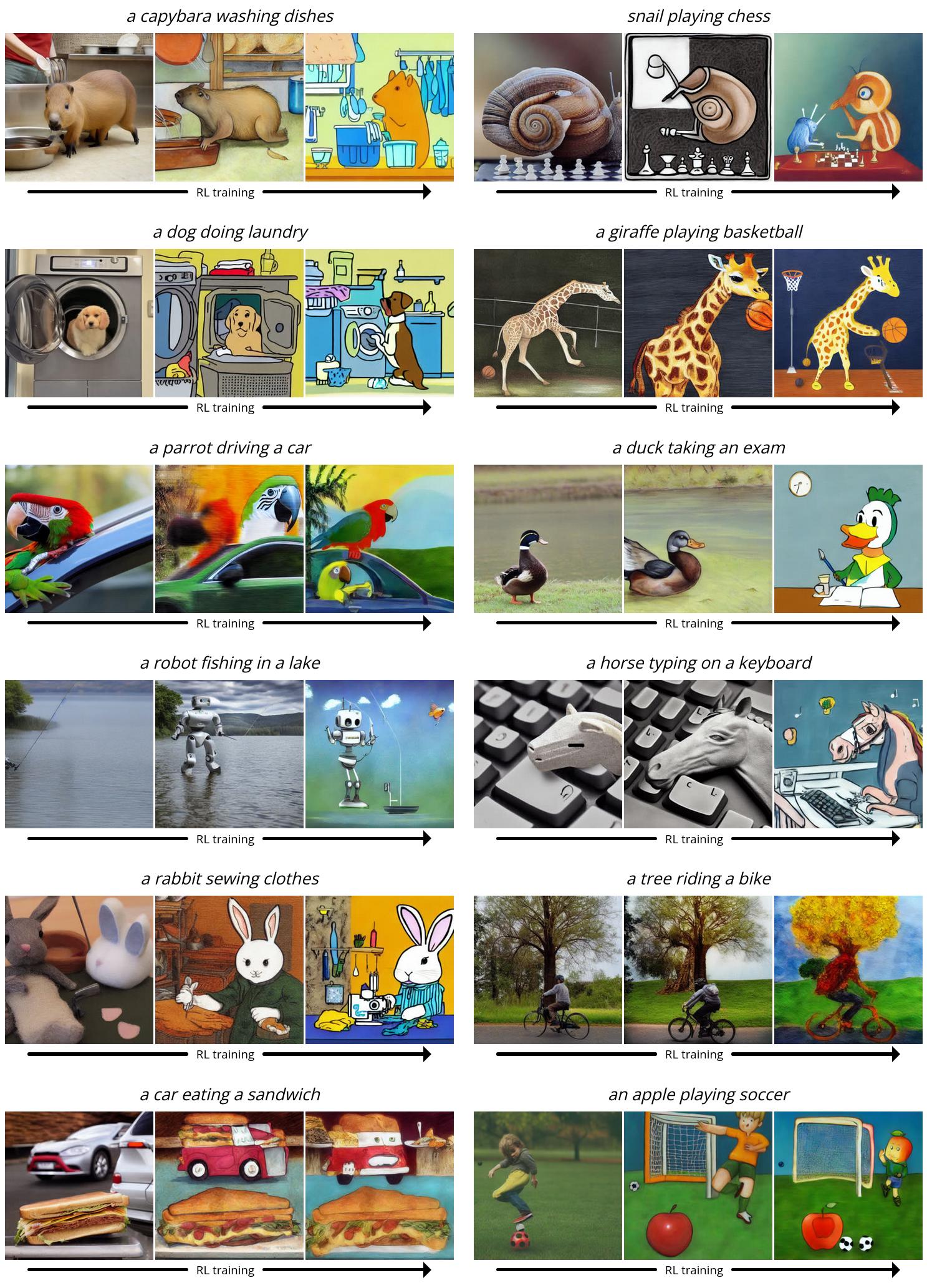

Next, we demonstrate DDPO on the more complex prompt-image alignment task. Here, we show several snapshots from the training process: each series of three images shows samples for the same prompt and random seed over time, with the first sample coming from vanilla Stable Diffusion. Interestingly, the model shifts towards a more cartoon-like style, which was not intentional. We hypothesize that this is because animals doing human-like activities are more likely to appear in a cartoon-like style in the pretraining data, so the model shifts towards this style to more easily align with the prompt by leveraging what it already knows.

Unexpected Generalization

Surprising generalization has been found to arise when finetuning large language models with RL: for example, models finetuned on instruction-following only in English often improve in other languages. We find that the same phenomenon occurs with text-to-image diffusion models. For example, our aesthetic quality model was finetuned using prompts that were selected from a list of 45 common animals. We find that it generalizes not only to unseen animals but also to everyday objects.

Our prompt-image alignment model used the same list of 45 common animals during training, and only three activities. We find that it generalizes not only to unseen animals but also to unseen activities, and even novel combinations of the two.

Overoptimization

It is well-known that finetuning on a reward function, especially a learned one, can lead to reward overoptimization where the model exploits the reward function to achieve a high reward in a non-useful way. Our setting is no exception: in all the tasks, the model eventually destroys any meaningful image content to maximize reward.

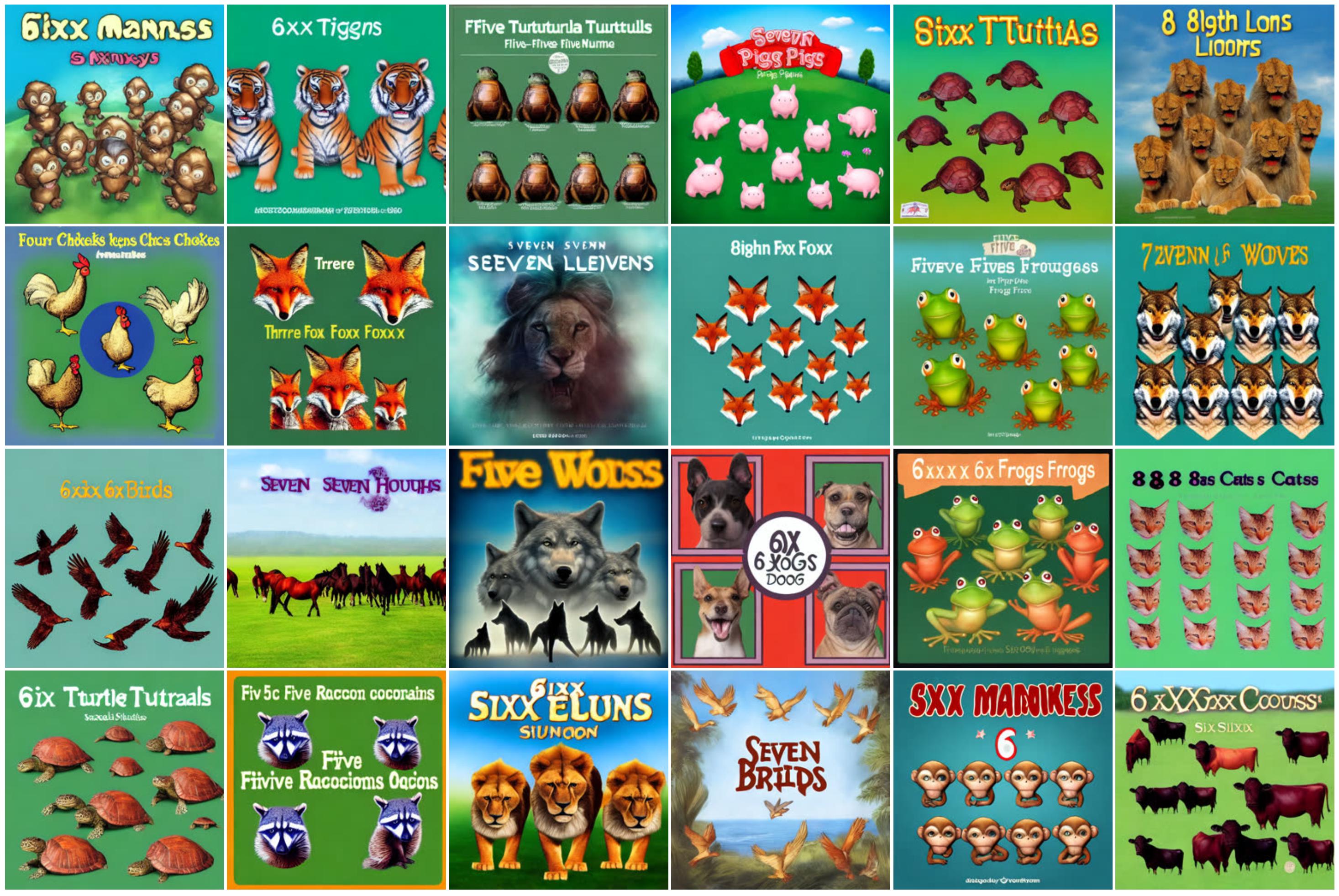

We also discovered that LLaVA is susceptible to typographic attacks: when optimizing for alignment with respect to prompts of the form “[n] animals”, DDPO was able to successfully fool LLaVA by instead generating text loosely resembling the correct number.

There is currently no general-purpose method for preventing overoptimization, and we highlight this problem as an important area for future work.

Conclusion

Diffusion models are hard to beat when it comes to producing complex, high-dimensional outputs. However, so far they have mostly been successful in applications where the goal is to learn patterns from lots and lots of data (for example, image-caption pairs). What we’ve found is a way to effectively train diffusion models in a way that goes beyond pattern-matching, and without necessarily requiring any training data. The possibilities are limited only by the quality and creativity of your reward function.

The way we used DDPO in this work is inspired by the recent successes of language model finetuning. OpenAI’s GPT models, like Stable Diffusion, are first trained on huge amounts of Internet data; they are then finetuned with RL to produce useful tools like ChatGPT. Typically, their reward function is learned from human preferences, but others have more recently figured out how to produce powerful chatbots using reward functions based on AI feedback instead. Compared to the chatbot regime, our experiments are small-scale and limited in scope. But considering the enormous success of this “pretrain + finetune” paradigm in language modeling, it certainly seems like it’s worth pursuing further in the world of diffusion models. We hope that others can build on our work to improve large diffusion models, not just for text-to-image generation, but for many exciting applications such as video generation, music generation, image editing, protein synthesis, robotics, and more.

Furthermore, the “pretrain + finetune” paradigm is not the only way to use DDPO. As long as you have a good reward function, there’s nothing stopping you from training with RL from the start. While this setting is as-yet unexplored, this is a place where the strengths of DDPO could really shine. Pure RL has long been applied to a wide variety of domains ranging from playing games to robotic manipulation to nuclear fusion to chip design. Adding the powerful expressivity of diffusion models to the mix has the potential to take existing applications of RL to the next level, or even to discover new ones.
